While I could go on about my love/hate relationship with DG's features (it's mostly love), it is fortunately easy enough to manually add some functionality. I will also take a moment to say that this is my first post, and I would love any feedback on improving it.

When I run PL/SQL scripts in DataGrip, the task compiles but I cannot see the output. How do I get output in DataGrip? Also, when I run a dynamic block, it does not take an input value in DataGrip.

In short, batches give better performance and limited table/row locks when inserting thousands, millions, or even billions of rows.

Batches perform orders of magnitude faster than individual inserts. In SQL, each query is parsed and executed separately, which causes significant overhead between queries. This makes individual inserts very slow, while batches avoid almost all of this overhead.

One large insert is technically faster than batched inserts; however, this often comes at the cost of locking tables/rows for extended periods of time. Table and row locks block all other queries that modify data (causing a desync) and even some queries that read data (causing request lag). This is particularly harmful in production environments, especially when you need to comply with an SLA.

Inserting in batches gives you a significant performance boost while still preventing tables or rows from getting locked for too long, allowing normal queries to run at the same time as the set of batched queries. Additionally, in most cases, the difference in performance between batched inserts and one large insert is very minor.

Create a new file called "SQL Batch Multi-Line " (the double extension is important for syntax highlighting). That's it! You now have access to exporting rows as batches. You can also customize the output using the configuration vars at the top of the file, but the defaults should work well for most people.
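To make the batching idea concrete, here is a minimal Python sketch of how rows could be grouped into multi-row INSERT statements. It is not the extractor script from this post: the function names, the `users` table, and the default batch size of 1000 are assumptions chosen for illustration, and the literal escaping is deliberately simplified.

```python
from typing import Iterable, Iterator, Sequence


def sql_literal(value: object) -> str:
    """Render a Python value as a SQL literal (simplified illustration)."""
    if value is None:
        return "NULL"
    if isinstance(value, bool):
        # Assumption: booleans are written as 1/0, which most databases accept.
        return str(int(value))
    if isinstance(value, (int, float)):
        return str(value)
    # Escape single quotes by doubling them, the standard SQL convention.
    return "'" + str(value).replace("'", "''") + "'"


def batched_inserts(
    table: str,
    columns: Sequence[str],
    rows: Iterable[Sequence[object]],
    batch_size: int = 1000,
) -> Iterator[str]:
    """Yield one multi-row INSERT statement per batch of rows."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    header = f"INSERT INTO {table} ({', '.join(columns)}) VALUES"
    batch = []
    for row in rows:
        batch.append("(" + ", ".join(sql_literal(v) for v in row) + ")")
        if len(batch) == batch_size:
            yield header + "\n" + ",\n".join(batch) + ";"
            batch = []
    if batch:
        # Emit the final, possibly smaller, batch.
        yield header + "\n" + ",\n".join(batch) + ";"


if __name__ == "__main__":
    sample = [(1, "Ann"), (2, "O'Brien"), (3, None)]
    for statement in batched_inserts("users", ["id", "name"], sample, batch_size=2):
        print(statement)
```

Each yielded statement is parsed and executed once for the whole batch, and locks are held only for the duration of that batch. A smaller batch size shortens lock time at the cost of more statements.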
This isn't so much a "great new feature" as it is a missing feature from DataGrip.

If you run into a port conflict, you need to use another port in WSL, for example 5433. You can change it in nf, on the line that sets the port (5432 by default).

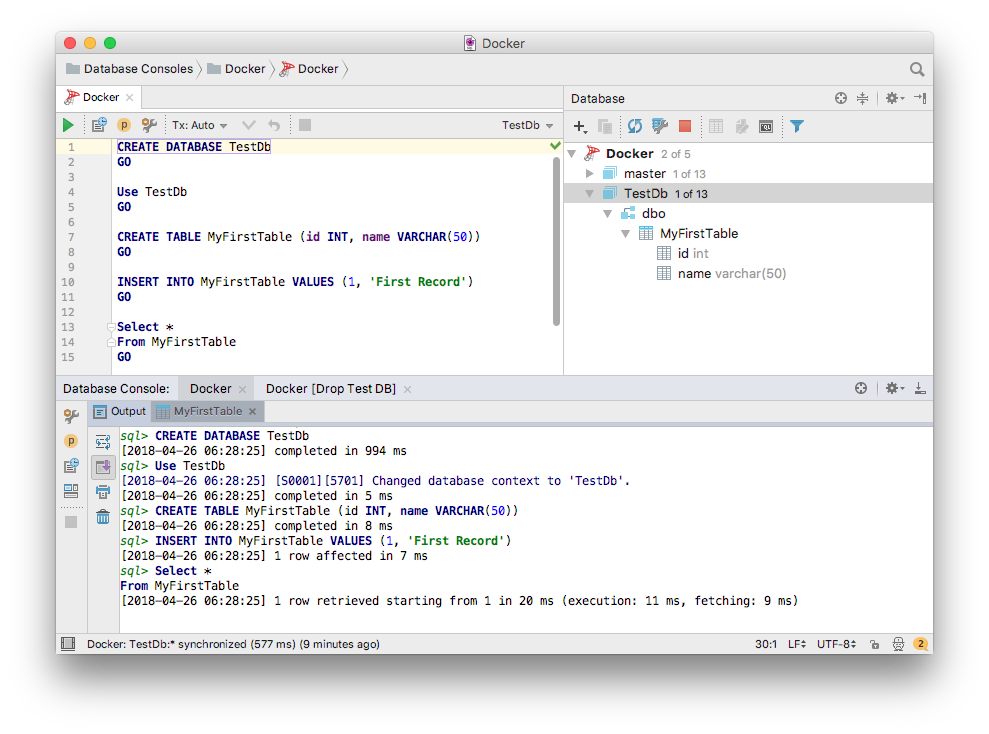

I decided to test DataGrip particularly because of its support for multiple database types. One issue that I am having, and that is forcing me to keep SSMS open, is the SQL Server Agent. When developing, I use it quite a bit to execute jobs from the agent.

Now let's explain what's inside that table. ID: this id represents the id of the statement that MySQL is going to execute, in order.

Then open pg_hba.conf (located in the same path that contains nf) with sudo and, at the end of the file, add the following line to allow all connections (be careful): host all all 0.0.0.0/0 md5

Save it and restart the service: sudo service postgresql restart.

Next, run netstat -t and copy the local address (not the foreign address), for example 172.17.125.13, but without the port, because you will use the port of the Postgres database.

Finally, use the copied IP in DataGrip together with the database info such as username, password, port, and database.

Be careful with the database port that you use: if you are also running Postgres on Windows, port 5432 is busy and you get an error.

Microsoft SQL Server & DataGrip - Microsoft SQL Server is a database server that supports multiple applications. DataGrip is a simple and intuitive database IDE built to manage SQL Server and other popular databases. strongDM is compatible with DataGrip and other IDEs. Any client capable of connecting to SQL Server will work with strongDM.
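As a rough illustration of the last Postgres steps, the Python sketch below drops the port from a netstat-style local address and builds a PostgreSQL JDBC URL of the kind DataGrip accepts. The helper names, the port 5433, and the database name `mydb` are hypothetical, and only IPv4 addresses are assumed.

```python
def strip_port(local_address: str) -> str:
    """Return the host part of an IPv4 'host:port' string as shown by netstat."""
    host, sep, _port = local_address.rpartition(":")
    # If there is no colon, the input is already a bare host.
    return host if sep else local_address


def jdbc_url(host: str, port: int, database: str) -> str:
    """Build a PostgreSQL JDBC URL from the connection details."""
    return f"jdbc:postgresql://{host}:{port}/{database}"


if __name__ == "__main__":
    host = strip_port("172.17.125.13:5432")
    # Assumption: Postgres in WSL was moved to 5433 to avoid a port conflict.
    print(jdbc_url(host, 5433, "mydb"))
```

The username and password are entered separately in the data source settings, so they are left out of the URL here.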

I've been testing DataGrip as a SQL IDE recently and I really like it. To connect from DataGrip, you need to enable TCP/IP connections to the Postgres database. To enable that, open /etc/postgres/./nf, find the line that starts with #listen_addresses, and change it to listen_addresses = '*' (remove the # and set the value to *), then save it.
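Once TCP/IP connections are enabled and the service has been restarted, a quick reachability check can confirm that the port accepts connections before you configure DataGrip. This is a minimal Python sketch; the function name and the example address are assumptions, and it only tests that a TCP connection can be opened, not that authentication will succeed.

```python
import socket


def can_connect(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    # Assumption: example address and default Postgres port.
    print(can_connect("172.17.125.13", 5432))
```

If this returns False, the listen_addresses setting, the configured port, or a firewall are the first things to check.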
